Running Docker Flow Proxy With Automatic Reconfiguration

Docker Flow Proxy running in swarm mode is designed to leverage the features introduced in Docker v1.12+. The examples that follow assume that you have Docker Machine v0.8+, which includes Docker Engine v1.12+.

Disclaimer: all code snippets below require Docker 1.13+.

TL;DR

Docker 1.13 simplifies deployment of composed applications to a swarm mode cluster. You can do it without creating a new DAB (Distributed Application Bundle) file, using just the familiar docker-compose.yml syntax (with some additions) and the --compose-file option. Read about this exciting feature, and try building, testing and deploying images instantly.

Swarm cluster

Docker Engine 1.12 introduced a new swarm mode for natively managing a cluster of Docker Engines, called a swarm. Docker swarm mode no longer requires an external key-value store.

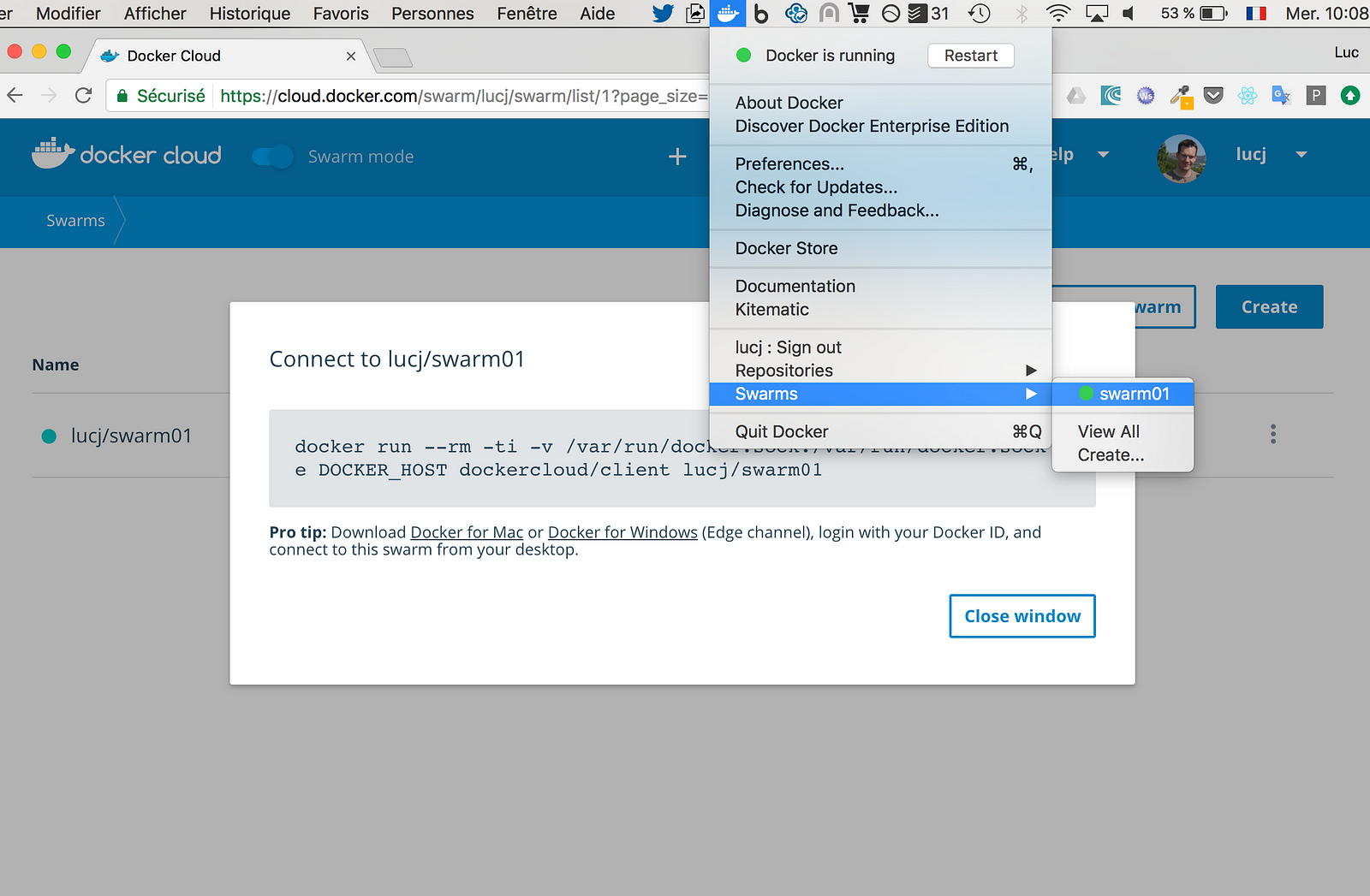

If you want to run a swarm cluster on a developer’s machine, there are several options. The first and most widely known is to use the docker-machine tool with a virtualization driver (VirtualBox, Parallels or other). In this post, I will use another approach: using a Docker image with Docker for Mac; see more details in my post.

Docker Registry mirror

When you deploy a new service on a local swarm cluster, I recommend setting up a local Docker registry mirror so you can run all swarm nodes with the --registry-mirror option, pointing to your local Docker registry. By running a local Docker registry mirror, you can keep most of the redundant image fetch traffic on your local network and speed up service deployment.

Docker Swarm cluster bootstrap script

I’ve prepared a shell script to bootstrap a 4-node swarm cluster with a Docker registry mirror and a very nice visualizer application.
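The original script is not reproduced in this text. As a rough sketch only, a bootstrap along these lines could look like the following. The mirror port (4000), the container names (`registry-mirror`, `worker-1` to `worker-3`), the `registry:2` and `docker:dind` images, and the `APPLY` switch are all assumptions made for illustration, and the visualizer step is left out. By default the script only prints the commands it would run.

```shell
#!/bin/sh
# Sketch of a local swarm bootstrap; NOT the author's original script.
# Assumed for illustration: mirror port, container names, image tags, APPLY switch.

MIRROR_PORT=4000
NUM_WORKERS=3

# Print the command by default; execute it only when APPLY=1 is set.
run() {
  if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi
}

bootstrap() {
  # Registry running as a pull-through cache (mirror) of Docker Hub.
  run docker run -d --restart=always --name registry-mirror \
    -p "${MIRROR_PORT}:5000" \
    -e REGISTRY_PROXY_REMOTEURL=https://registry-1.docker.io \
    registry:2

  # Turn the current engine into the swarm manager.
  run docker swarm init

  if [ "${APPLY:-0}" = "1" ]; then
    manager_ip=$(docker info --format '{{.Swarm.NodeAddr}}')
    token=$(docker swarm join-token -q worker)
  else
    manager_ip='<manager-ip>'
    token='<worker-token>'
  fi

  i=1
  while [ "$i" -le "$NUM_WORKERS" ]; do
    # Each worker is a docker-in-docker container whose inner daemon uses the mirror.
    run docker run -d --privileged --name "worker-$i" --hostname "worker-$i" \
      docker:dind --registry-mirror "http://${manager_ip}:${MIRROR_PORT}"
    # The inner daemon may need a few seconds before it accepts commands.
    if [ "${APPLY:-0}" = "1" ]; then sleep 5; fi
    run docker exec "worker-$i" docker swarm join --token "$token" "${manager_ip}:2377"
    i=$((i + 1))
  done
}

bootstrap
```

Running it as-is prints the plan; running it with `APPLY=1` executes the commands against the local engine. Depending on the host, `docker swarm init` may also need an explicit `--advertise-addr`, and newer `docker:dind` images may need extra settings, so treat the flags as a starting point.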

The script initializes the Docker engine as a swarm manager, then starts 3 new docker-in-docker containers and joins them to the swarm cluster as worker nodes. All worker nodes run with the --registry-mirror option.

Visualizer image: manomarks/visualizer:beta

Deploying multi-container application – the “old” way

Docker Compose is a tool (and deployment specification format) for defining and running composed multi-container Docker applications. Before Docker 1.12, you could use docker-compose to deploy such applications to a swarm cluster. With the 1.12 release, that is no longer possible: docker-compose can deploy your application on a single Docker host only.

In order to deploy it to a swarm cluster, you need to create a special deployment specification file (also known as a Distributed Application Bundle) in DAB format. To create this file, run docker-compose bundle. This creates a JSON file that describes a multi-container composed application with Docker images referenced by @sha256 digest instead of tags. Currently the DAB file format does not support a number of settings from docker-compose.yml and does not support options from the docker service create command.

It’s really too bad: the DAB bundle format looks promising, but is useless in Docker 1.12.

Deploying multi-container application – the “new” way

With Docker 1.13, the “new” way to deploy a multi-container composed application is to use docker-compose.yml again (hurrah!). Kudos to the Docker team!

Note: You do not need docker-compose. You only need a YAML file in docker-compose format (version: '3').

Docker compose v3 (version: '3')

So, what’s new in docker compose version 3? First, I suggest you take a deeper look at the version 3 format itself. It is an extension of the well-known docker-compose format.

Note: the docker-compose tool (ver. 1.9.0) does not support docker-compose.yaml version: '3' yet.

The most visible change is around swarm service deployment.

Now you can specify all options supported by the docker service create/update commands. This includes:

- number of service replicas (or global service)
- service labels
- hard and soft limits for service (container) CPU and memory
- service restart policy
- service rolling update policy
- deployment placement constraints

Docker compose v3 example

I’ve created a “new” compose file (v3) for the classic Cats vs. Dogs voting app.

Run the docker deploy --compose-file docker-compose.yml VOTE command to deploy my version of the “Cats vs. Dogs” application on a swarm cluster.

Hope you find this post useful. I look forward to your comments and any questions you have.
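The compose file itself did not survive in this text. As a hedged illustration of the version 3 `deploy` options discussed above, the sketch below writes a small compose file and prints the deploy command. The service names, the `example/vote:latest` image, the label, and all numeric values are hypothetical; only the key names follow the version 3 format. The `APPLY` switch is also an assumption of this sketch.

```shell
#!/bin/sh
# Illustrative version 3 compose file; NOT the author's original file.
# Service names, images, labels and values below are hypothetical.
cat > docker-compose.yml <<'EOF'
version: "3"
services:
  vote:
    image: example/vote:latest   # hypothetical image name
    ports:
      - "5000:80"
    deploy:
      replicas: 2                # number of service replicas
      labels:
        com.example.tier: frontend
      resources:
        limits:                  # hard limits
          cpus: "0.25"
          memory: 128M
        reservations:            # soft limits
          cpus: "0.10"
          memory: 64M
      restart_policy:
        condition: on-failure
      update_config:             # rolling update policy
        parallelism: 1
        delay: 10s
  db:
    image: postgres:9.4
    volumes:
      - db-data:/var/lib/postgresql/data
    deploy:
      placement:
        constraints: [node.role == manager]
volumes:
  db-data:
EOF

# Deploy only when APPLY=1 is set; otherwise just show the command.
if [ "${APPLY:-0}" = "1" ]; then
  docker stack deploy --compose-file docker-compose.yml VOTE
else
  echo "+ docker stack deploy --compose-file docker-compose.yml VOTE"
fi
```

The sketch uses `docker stack deploy`, the full form of the deploy command in Docker 1.13. After a real deployment, `docker stack services VOTE` lists the services and their replica counts.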


The first thing I noticed reading through your Dockerfile is that you’re setting the host to use LOCALHOST as the address. Within a docker stack, however, your node will register incorrectly and will not be reachable from your hub. This is because ‘LOCALHOST’ resolves to the localhost of that container, and not to anything an outside service can identify. The change you should make, in my opinion, is to use HOSTNAME when setting the host of the node. This applies the hostname of the container in which the node is running, which allows the hub to communicate back to the node.

In your example you will see that the hub is continuously dropping that node as ‘unreachable’, and in my experience this is the cause. Of course, there is the possibility that I am incorrect, but, having set up Selenium Grid numerous times in stacks, I think this is probably sound advice.

Also, if you’re using the docker stack deploy commands, I’d think that this should be within the compose file, and not the Dockerfile, as your resulting image is much less portable with these variables defined within the Dockerfile.
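To make that concrete, here is a minimal sketch of a stack file that sets the node host from the compose file rather than the Dockerfile. It assumes the stock `selenium/hub` and `selenium/node-chrome` 3.x images, whose entry point reads `SE_OPTS`, `HUB_HOST` and `HUB_PORT`; a custom image like yours may use different variables, so the names here are assumptions. The file name, the stack name `grid`, and the `APPLY` switch are also made up for illustration.

```shell
#!/bin/sh
# Sketch of a Selenium Grid stack file; assumes the stock selenium 3.x images.
# A custom node image may expect different variables or a different entry point.
cat > selenium-stack.yml <<'EOF'
version: "3"
services:
  hub:
    image: selenium/hub:3.141.59
    ports:
      - "4444:4444"
  chrome:
    image: selenium/node-chrome:3.141.59
    environment:
      HUB_HOST: hub
      HUB_PORT: 4444
    deploy:
      replicas: 1
    # $$ keeps compose from substituting the variable, so the container's own
    # HOSTNAME is used when the node registers with the hub.
    entrypoint: bash -c 'SE_OPTS="-host $$HOSTNAME" /opt/bin/entry_point.sh'
EOF

# Deploy only when APPLY=1 is set; otherwise just show the command.
if [ "${APPLY:-0}" = "1" ]; then
  docker stack deploy --compose-file selenium-stack.yml grid
else
  echo "+ docker stack deploy --compose-file selenium-stack.yml grid"
fi
```

Because the host is resolved at container start, each replica registers under its own container hostname, which the hub can reach over the stack's overlay network.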